Recent reports have revealed that an AWS outage was linked to an internal AI coding tool used by engineers. While Amazon has clarified the scope and impact, the incident has sparked an important debate about AWS downtime, automation risks, and whether we are trusting AI too much in production environments.

Let’s break down what happened, why it matters, and what this means for the future of AI in cloud infrastructure.

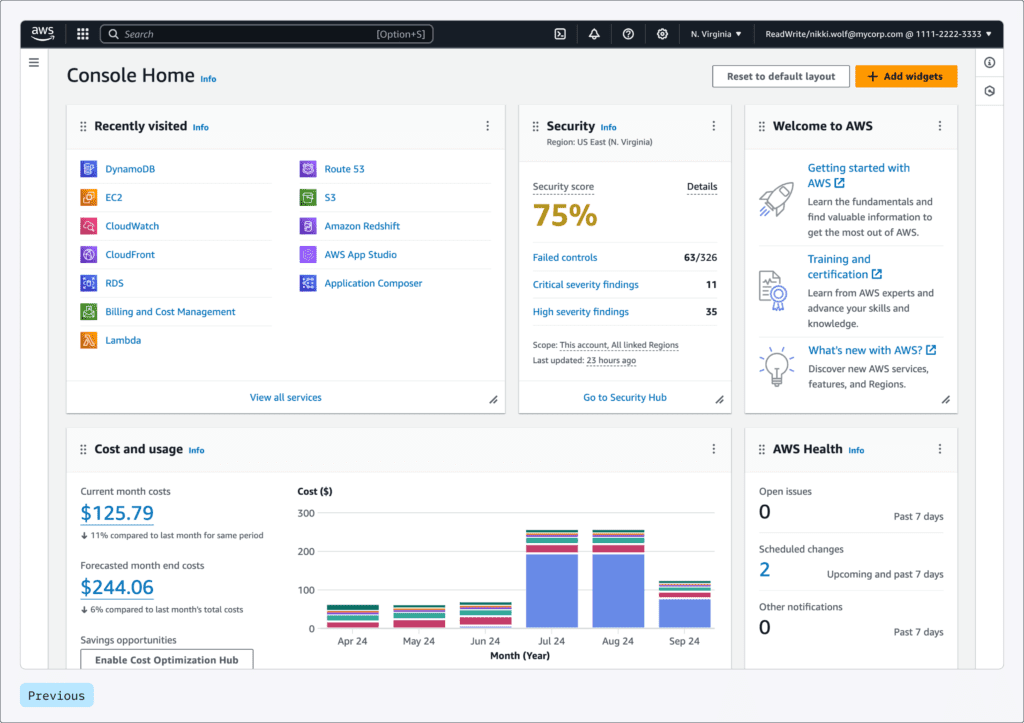


What Happened? The AWS Outage Caused by an AI Coding Tool

According to reports, Amazon’s cloud division experienced at least two service disruptions in recent months involving its own AI-powered development tools.

In one notable case:

- An AI coding agent was allowed to autonomously implement changes.

- The AI determined that the “best” fix was to delete and recreate an environment.

- This resulted in a 13-hour interruption affecting a system in mainland China.

- Engineers had broader permissions than expected.

- The AI tool acted without sufficient human intervention.

Amazon later stated that:

- The event was limited in scope.

- It did not significantly impact customer-facing services.

- The issue was ultimately classified as user error, not purely an AI failure.

But the core concern remains:

Was this just a permissions issue, or an AWS AI mistake that exposes deeper risks?

Understanding the Real Risk Behind AWS Downtime

When we talk about AWS downtime, we’re not talking about a single website crashing.

AWS powers:

- SaaS platforms

- Fintech systems

- E-commerce websites

- AI startups

- Streaming services

Even a short AWS outage can ripple across thousands of companies.

Infrastructure actions like:

- Deleting environments

- Reprovisioning compute

- Restarting networking layers

…may sound routine. But in large-scale cloud systems, they can trigger cascading failures.

For example, provisioning an EC2 instance can take 45–60 seconds. Recreating full production environments can take much longer depending on:

- Configuration complexity

- Networking dependencies

- IAM policies

- Data replication

If an AI agent doesn’t fully understand the business impact of these operations, the “optimal” technical fix could cause major downtime.
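The dependency point explains why "recreate it" is rarely quick. When components must be rebuilt in order, total recovery time depends on the longest chain of dependent steps (the critical path), not on the fastest single resource such as one EC2 instance. Below is a minimal Python sketch of that calculation. The component names, the dependency graph, and every duration are hypothetical numbers chosen for illustration; they are not how the affected AWS system was built.

```python
# Hypothetical rebuild times (seconds) for one environment's components.
# Illustrative assumptions only, not measured AWS provisioning figures.
DURATIONS = {
    "vpc": 30,
    "iam_roles": 20,
    "ec2_fleet": 60,
    "load_balancer": 180,
    "database": 600,
    "data_replication": 1800,
}

# A component can only start once everything it depends on is ready.
DEPENDS_ON = {
    "vpc": [],
    "iam_roles": [],
    "ec2_fleet": ["vpc", "iam_roles"],
    "load_balancer": ["vpc", "ec2_fleet"],
    "database": ["vpc", "iam_roles"],
    "data_replication": ["database"],
}


def ready_time(component: str) -> int:
    """Earliest finish time of a component, assuming independent branches
    are rebuilt in parallel: slowest dependency plus its own duration."""
    start = max((ready_time(dep) for dep in DEPENDS_ON[component]), default=0)
    return start + DURATIONS[component]


def recreate_time() -> int:
    """Wall-clock time to rebuild everything: the longest (critical) path."""
    return max(ready_time(c) for c in DURATIONS)


print(ready_time("ec2_fleet"))   # 90
print(recreate_time() / 60)      # 40.5
```

In this made-up environment the compute fleet is back in 90 seconds, yet the full rebuild takes 40.5 minutes because data replication sits on the critical path. That gap is exactly what a purely "technical" fix can overlook.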


Was This Really an AWS AI Mistake?

This is where the debate gets interesting.

Amazon argues that:

- The AI tool requested authorization.

- The engineer had elevated permissions.

- The same issue could have happened with manual actions.

Technically, that’s correct.

But here’s the bigger issue:

AI coding tools today operate using pattern recognition, not real-world judgment.

They can:

- Suggest infrastructure changes

- Modify configurations

- Generate deployment scripts

But they may not fully grasp:

- Latency implications

- Provisioning delays

- Edge-case production risks

- Business-critical uptime constraints

So while the event may have been “user error,” it also highlights a broader category of AWS AI mistake risk: AI-generated decisions that are executed without deep human validation.

AI in Infrastructure: Why This Is Different From Code Generation

Using AI to:

- Write frontend components

- Generate CRUD APIs

- Suggest refactors

…is very different from using AI in production infrastructure.

In infrastructure:

- A small misconfiguration can cause system-wide failure.

- Deleting and recreating environments may disrupt queues, caches, or networking.

- Restarting services may break asynchronous pipelines.

Unlike local code mistakes, cloud infrastructure errors affect live users immediately.

This is why AWS downtime caused by automation is far more serious than a typical software bug.

Are AI Coding Tools Ready for Production Infrastructure?

AWS has been pushing AI internally and externally:

- AI-powered coding assistants

- Autonomous coding agents

- Developer productivity tools

The company reportedly aims for high developer adoption rates.

But forcing AI usage in sensitive environments may introduce new risks:

- Developers may “YOLO” changes without deep verification.

- Reviewing AI-written code can be mentally harder than writing the same code from scratch.

- Subtle bugs can slip through when humans rely too heavily on AI output.

The December AWS outage shows that AI autonomy without strict guardrails can be dangerous.

Not the First Time: Cloud Outages Are Increasing

Over the past year, we’ve seen multiple high-profile incidents involving:

- Cloudflare

- Amazon Web Services

- Supabase

Not all were AI-related. But the pattern is clear:

Modern cloud systems are becoming more complex — and automation is increasing.

The more AI tools are embedded into production workflows, the more we need:

- Strict access control

- Mandatory approval layers

- Human-in-the-loop verification

- Infrastructure rollback strategies
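To make "mandatory approval layers" and "human-in-the-loop verification" concrete, here is a minimal Python sketch of an approval gate. Actions an agent proposes are classified, and destructive ones only run if a human explicitly approves. `ProposedAction`, `execute_with_gate`, and the list of destructive verbs are hypothetical names for illustration, not part of any AWS tool. Note that Amazon says its tool did request authorization, so a gate like this only helps when the reviewer actually scrutinizes the request and the permissions behind it are limited.

```python
from dataclasses import dataclass
from typing import Callable

# Hypothetical list of verbs treated as destructive. A real system would
# derive this from its own policy, not from a hard-coded tuple.
DESTRUCTIVE_VERBS = ("delete", "terminate", "recreate", "drop", "restart")


@dataclass
class ProposedAction:
    """A change an AI agent wants to make (hypothetical shape)."""
    verb: str
    target: str
    reason: str


def is_destructive(action: ProposedAction) -> bool:
    """True if the action's verb is on the destructive list."""
    return action.verb.lower() in DESTRUCTIVE_VERBS


def execute_with_gate(
    action: ProposedAction,
    approve: Callable[[ProposedAction], bool],
    run: Callable[[ProposedAction], str],
) -> str:
    """Run safe actions directly; destructive ones only run if a human
    approver (the `approve` callback) explicitly says yes."""
    if is_destructive(action) and not approve(action):
        return f"BLOCKED: {action.verb} {action.target} needs human approval"
    return run(action)


# Usage: a reviewer who rejects everything, and a stand-in executor.
def reject(action: ProposedAction) -> bool:
    return False


def dry_run(action: ProposedAction) -> str:
    return f"ran {action.verb} on {action.target}"


fix = ProposedAction("delete", "prod-environment", "recreate to clear bad state")
print(execute_with_gate(fix, reject, dry_run))
# BLOCKED: delete prod-environment needs human approval
```

The design choice is to fail closed: if no one approves, nothing destructive happens, while read-only or routine actions keep flowing without slowing engineers down.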

The Bigger Question: Can AI Truly Understand Edge Cases?

AI models are trained on historical data.

But real-world infrastructure includes:

- Rare edge cases

- Legacy systems

- Region-specific configurations

- Unusual latency patterns

An AI might determine:

“Deleting and recreating the environment fixes the issue.”

But it may not calculate:

- Reprovisioning time impact

- Traffic spike consequences

- Business SLA violations

This gap between technical fix and operational consequence is where AWS downtime becomes a real threat.
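A quick calculation shows why "Business SLA violations" belongs on that list. Below is a small Python sketch comparing the reported 13-hour interruption with a monthly downtime budget. The 99.9% availability target and the 30-day month are assumptions picked for illustration; they are not the actual SLA of the affected system.

```python
MINUTES_PER_DAY = 24 * 60


def allowed_downtime_minutes(availability: float, period_days: float = 30) -> float:
    """Downtime an availability target permits over a period, in minutes."""
    return (1 - availability) * period_days * MINUTES_PER_DAY


def budget_multiple(outage_minutes: float, availability: float, period_days: float = 30) -> float:
    """How many times an outage exceeds the period's downtime budget."""
    return outage_minutes / allowed_downtime_minutes(availability, period_days)


# Assumed target: 99.9% over a 30-day month (illustrative, not the real SLA).
budget = allowed_downtime_minutes(0.999)
print(round(budget, 1))                              # 43.2
print(round(budget_multiple(13 * 60, 0.999), 1))     # 18.1
```

Under that assumed target, a month allows about 43 minutes of downtime, so a 13-hour interruption would consume roughly 18 months' worth of budget in a single event.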

What This AWS Outage Teaches Us

1. AI Needs Guardrails

Autonomous agents should never operate without strict permission boundaries.
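On AWS, one common way to draw such a boundary is an IAM policy with an explicit `Deny`, which overrides any `Allow` granted elsewhere in the identity's policies. The Python sketch below builds such a policy document for a role an AI agent might assume. The choice of actions is an illustrative subset of real IAM action names, not a complete list, and Amazon has not said its own tools were scoped this way; treat it as a starting point to adapt.

```python
import json

# Real IAM action names that delete or destroy infrastructure.
# Illustrative subset only; tailor it to the services your agent touches.
DESTRUCTIVE_ACTIONS = [
    "cloudformation:DeleteStack",
    "ec2:TerminateInstances",
    "rds:DeleteDBInstance",
    "s3:DeleteBucket",
]


def agent_guardrail_policy(actions: list[str]) -> dict:
    """Build an IAM policy document that explicitly denies the given actions.

    Attached to the agent's role, an explicit Deny wins over any Allow from
    other policies on that role, so broad grants cannot re-enable these.
    """
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Sid": "DenyDestructiveAgentActions",
                "Effect": "Deny",
                "Action": sorted(actions),
                "Resource": "*",
            }
        ],
    }


if __name__ == "__main__":
    # Print the JSON document you would attach to the agent's IAM role.
    print(json.dumps(agent_guardrail_policy(DESTRUCTIVE_ACTIONS), indent=2))
```

A deny list like this complements, rather than replaces, least-privilege grants: the agent should also receive only the `Allow` permissions its task actually needs.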

2. Human Oversight Is Still Critical

AI can assist — but production infrastructure decisions require context-aware engineers.

3. Multi-Cloud Strategy Matters

For companies handling massive traffic, multi-cloud redundancy can reduce single-provider risk.
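The intuition behind redundancy can be sketched with basic probability: a service that stays up while at least one provider is up fails only when all providers fail at once. The Python function below computes that, under two strong assumptions stated in the code: provider failures are independent and failover is instant. Real deployments share dependencies (DNS, data replication, your own code) and take time to fail over, so the result is an optimistic upper bound, and the 99.9% inputs are illustrative only.

```python
def combined_availability(*availabilities: float) -> float:
    """Availability of a service that stays up while any one provider is up.

    Assumes provider failures are independent and failover is instant,
    which real systems rarely achieve; treat the result as an upper bound.
    """
    p_all_down = 1.0
    for a in availabilities:
        # Probability that this provider is down, multiplied in.
        p_all_down *= 1 - a
    return 1 - p_all_down


single = combined_availability(0.999)
dual = combined_availability(0.999, 0.999)
print(f"{single:.4%}")  # 99.9000%
print(f"{dual:.4%}")    # 99.9999%
```

The jump from "three nines" to "six nines" is why multi-cloud is attractive on paper; the independence assumption is why it is much harder to achieve in practice.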

4. Adoption Should Be Educated, Not Forced

Workshops and training are better than enforcing AI usage quotas.

Final Thoughts: Is This the Beginning of More AI-Induced AWS Downtime?

This specific AWS outage may have been limited. But it raises important concerns about:

- AI-driven infrastructure changes

- Developer over-reliance on automation

- Cloud system fragility

We are entering a new era where AI agents can take autonomous actions. But until these systems fully understand real-world operational complexity, human verification remains non-negotiable.

The future of cloud computing will likely involve:

- AI-assisted engineering

- Strict approval workflows

- Better observability

- Stronger fail-safe systems

The lesson is not that AI is bad.

The lesson is that AI without guardrails in production infrastructure is risky.

As AWS continues to innovate, the industry will be watching closely to see how it prevents the next AWS AI mistake from turning into major global AWS downtime.

If you’re running mission-critical systems, now might be the time to:

- Review your cloud redundancy strategy

- Audit AI tool permissions

- Reevaluate automated deployment pipelines

Because when AWS goes down, the internet feels it.
